The modern Security Operations Center is besieged not only by attackers but also by the sheer scale of the threat environment it has to protect. Millions of alerts every day, a worldwide shortage of skilled analysts, and increasingly capable adversaries who are themselves using AI have pushed conventional human-based detection methods to their limits.

The solution emerging in the enterprise security community is the Agentic SOC: an autonomous, AI-driven operational paradigm able to identify, investigate, and respond to threats at machine speed.

This guide examines what an agentic SOC looks like, how organizations can transition to one, and what it means for the analysts and security leaders who have to make that change.

The Breaking Point of the Traditional SOC

For decades the SOC has followed a well-worn pattern: SIEM alerts come in, tier-1 analysts triage them, and tier-2 analysts and senior engineers work the escalations through to confirmed incidents. The model was built for a world where threats moved slowly enough for humans to keep pace. That world no longer exists.

Attack chains spanning initial access, lateral movement, and data exfiltration can now be completed in less than four hours. Ransomware gangs have automated their initial compromise and privilege escalation pipelines. Nation-state actors wage continuous low-and-slow campaigns whose signals are so faint that exhausted analysts are unlikely to notice them in a sea of false positives.

Meanwhile, the cybersecurity talent gap keeps widening. Companies worldwide have millions of vacant security jobs, and the analysts who are available are costly, overworked, and hard to retain. Scaling detection and response by adding headcount is neither economically viable nor operationally fast enough.

These strains have created a forcing function: security teams can no longer treat automation and AI as a productivity supplement, but must consider them a fundamental layer of operation.

What Is an Agentic SOC?

An agentic SOC replaces or supplements human-initiated, sequential workflows with AI agents: software that can recognize the indicators of a threat, reason about them, take investigative steps on its own, and execute response actions without a human approving each decision.

The word ‘agentic’ is key. Conventional security automation runs pre-written playbooks: ‘when X, then Y.’ Agentic systems operate dynamically: they adjust their behavior based on what they observe, chain each investigative action to the next, and make judgments about severity and response much as a senior analyst would, but without the fatigue.

Core Capabilities of an Agentic SOC

- Detection: Autonomous triage and alert prioritization based on threat and behavioral context.

- Investigation: Chained investigations that query logs, pull endpoint data, and correlate identity data without an analyst advancing each step.

- Containment: Automated containment measures, e.g., endpoint isolation, credential revocation, or network path blocking.

- Documentation: Human-readable incident summaries and root cause analysis.

- Learning: Continuous self-refinement based on feedback and corrections from analysts.

This is different from a SOAR (Security Orchestration, Automation, and Response) platform. SOAR automates the execution of playbooks that humans have written. An agentic system also decides what to investigate, how to conduct the investigation, and which response to execute. Rather than strictly following predefined scripts, agents act on their own judgment, while humans set the policy guardrails that bound their actions.

The Technology Stack Enabling Autonomous Response

Building an agentic SOC is not a matter of buying a single commercial product. It requires an integrated architecture across multiple technology domains.

Large Language Models and Reasoning Engines

LLMs are the reasoning interface that can interpret natural-language threat intelligence, comprehend the story of an attack chain, generate investigative hypotheses, and relay discoveries to analysts in natural language.

The newest generation of security-oriented LLMs can walk through alert metadata, interpret MITRE ATT&CK techniques in context, and make risk judgments without a strict set of rules.

AI Agents with Tool Access

Agents are LLMs or other AI systems that have been given the ability to take actions: they may query SIEMs, request EDR telemetry, invoke threat intelligence APIs, or run response playbooks.

The most important design idea is that an agent is given an objective, such as ‘decide whether this alert poses a real threat and contain it if it does’, and then independently chooses and orders the tools required to achieve it.
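The objective-driven pattern can be illustrated with a small Python sketch. Everything here is hypothetical: the tool functions (`query_siem`, `get_edr_telemetry`, `lookup_threat_intel`) return canned data rather than calling any real product API, and the rule-based `choose_next_tool` merely stands in for the reasoning an LLM would do.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical tools returning canned data. Real ones would call SIEM,
# EDR, and threat intelligence APIs.
def query_siem(alert: dict) -> dict:
    return {"related_alerts": 3}

def get_edr_telemetry(alert: dict) -> dict:
    return {"suspicious_process": alert["host"] == "ws-042"}

def lookup_threat_intel(alert: dict) -> dict:
    return {"known_bad_ip": alert["src_ip"] in {"203.0.113.7"}}

TOOLS: Dict[str, Callable[[dict], dict]] = {
    "siem": query_siem,
    "edr": get_edr_telemetry,
    "intel": lookup_threat_intel,
}

def choose_next_tool(evidence: dict) -> Optional[str]:
    """Stand-in for the LLM planner: pick the next tool from the evidence so far."""
    if "known_bad_ip" not in evidence:
        return "intel"
    if evidence["known_bad_ip"] and "suspicious_process" not in evidence:
        return "edr"   # only dig into the endpoint if intel looks bad
    if evidence["known_bad_ip"] and "related_alerts" not in evidence:
        return "siem"  # then look for related activity
    return None        # enough evidence gathered

def investigate(alert: dict, max_steps: int = 5) -> dict:
    """Pursue the objective 'is this alert a real threat?' by chaining tools."""
    evidence: dict = {}
    steps: List[str] = []
    for _ in range(max_steps):
        tool = choose_next_tool(evidence)
        if tool is None:
            break
        evidence.update(TOOLS[tool](alert))
        steps.append(tool)
    is_threat = bool(evidence.get("known_bad_ip") and evidence.get("suspicious_process"))
    return {"steps": steps, "is_threat": is_threat}
```

The point of the sketch is that the order of tool calls is not fixed in advance: a benign-looking alert stops after one lookup, while a suspicious one triggers a longer chain.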

Detection Engineering and Telemetry

Agentic systems are only as good as the signals they receive. High-fidelity telemetry from endpoints (EDR), network flows, identity providers, and cloud infrastructure is necessary.

In fact, organizations transitioning to an agentic SOC often find they must first invest in telemetry coverage to bridge blind spots that human analysts previously navigated intuitively.

Decision Boundaries and Policy Guardrails

Autonomous response raises a governance question: which actions may the AI take on its own? A workable approach gives the AI freedom in low-risk situations with well-understood response actions (blocking an IP, quarantining a file), while requiring human involvement in high-impact decisions (isolating a critical production server, revoking a C-suite credential).
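One way such a boundary might be encoded is as a small policy function. The sketch below is an assumption for illustration only: the action names, the asset tags, and the 0.9 confidence threshold are all hypothetical and would be set by each organization's own policy.

```python
from enum import Enum

class Decision(Enum):
    AUTO_EXECUTE = "auto_execute"
    REQUIRE_APPROVAL = "require_approval"

# Hypothetical policy: actions treated as low-risk and easy to reverse.
LOW_RISK_ACTIONS = {"block_ip", "quarantine_file"}
# Hypothetical asset tags that always force human review.
CRITICAL_TAGS = {"production", "executive"}

def authorize(action: str, asset_tags: set, confidence: float,
              min_confidence: float = 0.9) -> Decision:
    """Decide whether the agent may act alone or must ask a human."""
    if asset_tags & CRITICAL_TAGS:
        return Decision.REQUIRE_APPROVAL  # high-impact target
    if action in LOW_RISK_ACTIONS and confidence >= min_confidence:
        return Decision.AUTO_EXECUTE
    return Decision.REQUIRE_APPROVAL      # default to human review
```

Defaulting to `REQUIRE_APPROVAL` means any action the policy does not explicitly recognize goes to a human, which is the conservative choice.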

The Transition Roadmap

Turning a human-led SOC into an agentic SOC is not a weekend deployment. Successful organizations treat it as a gradual operational change rather than a technology project.

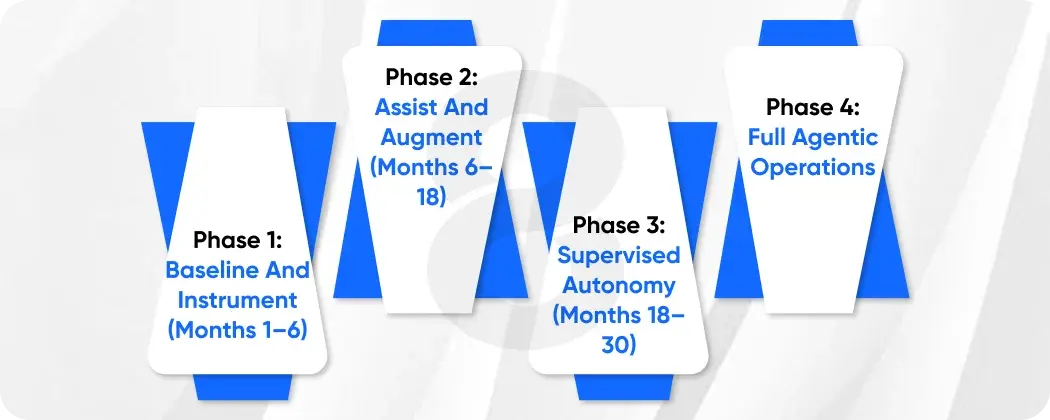

Phase 1: Baseline and Instrument (Months 1–6)

AI agents need high-quality, well-labeled data before they can work independently. This stage is about auditing telemetry coverage, improving alert fidelity, and documenting the playbooks analysts already follow, since these become the first training information and policy logic for the AI agents.

- Assess telemetry coverage across endpoints, identity, network, and cloud.

- Minimize SIEM noise: suppress known false alarms and add context to the remaining alerts.

- Document tier-1 investigation processes in a structured, machine-readable form.

- Establish baseline measures: mean time to detect (MTTD), mean time to respond (MTTR), and analyst utilization.
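The baseline measures in the last item could be computed along these lines. This is a minimal sketch that assumes MTTD is measured from occurrence to detection and MTTR from detection to resolution (definitions vary between organizations); the incident records and field names are made up for illustration.

```python
from datetime import datetime
from statistics import mean

# Illustrative incident records, not real data.
incidents = [
    {"occurred": "2024-01-01T00:00", "detected": "2024-01-01T02:00", "resolved": "2024-01-01T05:00"},
    {"occurred": "2024-01-02T10:00", "detected": "2024-01-02T14:00", "resolved": "2024-01-02T15:00"},
]

def _hours(start: str, end: str) -> float:
    """Elapsed hours between two ISO 8601 timestamps."""
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 3600

def baseline_metrics(records: list) -> dict:
    """Mean time to detect and mean time to respond, in hours."""
    return {
        "mttd_hours": mean(_hours(r["occurred"], r["detected"]) for r in records),
        "mttr_hours": mean(_hours(r["detected"], r["resolved"]) for r in records),
    }

print(baseline_metrics(incidents))  # {'mttd_hours': 3.0, 'mttr_hours': 2.0}
```

Capturing these numbers before any agent is deployed gives later phases something concrete to be compared against.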

Phase 2: Assist and Augment (Months 6–18)

During this stage, AI does not replace human analysts but works alongside them. The aim is to prove value, establish trust, and identify where autonomous action is safe.

- Deploy AI-assisted triage: agents pre-investigate alerts and present enriched summaries to analysts.

- Automate responses to low-risk, high-confidence detections (known malware hashes, obvious phishing links).

- Build feedback mechanisms that let analysts correct AI decisions. This data is invaluable for improving the agents.

- Monitor false positives and false negatives per detection type, along with the quality of AI recommendations.
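The feedback and monitoring items above might be combined as in the following sketch, which treats the analyst's verdict as ground truth and reports, per detection type, the share of reviewed alerts where the AI was wrong in each direction. The record format and field names are assumptions for illustration.

```python
from collections import defaultdict

def error_shares(feedback: list) -> dict:
    """Per detection type: share of reviewed alerts that were AI false
    positives or false negatives, using the analyst verdict as truth."""
    counts = defaultdict(lambda: {"fp": 0, "fn": 0, "total": 0})
    for item in feedback:
        c = counts[item["detection_type"]]
        c["total"] += 1
        ai, analyst = item["ai_verdict"], item["analyst_verdict"]
        if ai == "malicious" and analyst == "benign":
            c["fp"] += 1
        elif ai == "benign" and analyst == "malicious":
            c["fn"] += 1
    return {
        dtype: {"fp_share": c["fp"] / c["total"], "fn_share": c["fn"] / c["total"]}
        for dtype, c in counts.items()
    }

# Illustrative analyst feedback, not real data.
feedback = [
    {"detection_type": "phishing", "ai_verdict": "malicious", "analyst_verdict": "malicious"},
    {"detection_type": "phishing", "ai_verdict": "malicious", "analyst_verdict": "benign"},
    {"detection_type": "phishing", "ai_verdict": "benign", "analyst_verdict": "malicious"},
    {"detection_type": "phishing", "ai_verdict": "benign", "analyst_verdict": "benign"},
    {"detection_type": "malware", "ai_verdict": "malicious", "analyst_verdict": "malicious"},
]
```

Breaking the numbers out by detection type shows where the AI can be trusted with more autonomy and where it still needs supervision.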

Phase 3: Supervised Autonomy (Months 18–30)

Once trust is built and the models are refined, the SOC enters supervised autonomy. AI agents perform most tier-1 and tier-2 investigations, while humans review their results and handle escalations.

- Define explicit limits of autonomy: which response actions require human approval and which are performed automatically.

- Implement a real-time human override that lets analysts halt an autonomous response at any moment.

- Introduce automated investigation chains for lateral movement, identity-based attacks, and cloud threats.

- Institute 24/7 autonomous coverage for predetermined categories of threats, reducing the overnight workload on analysts.
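The real-time override in the list above could be as simple as a shared flag that the agent checks before every step. The sketch below is one hypothetical shape for it, using Python's `threading.Event` so the flag can safely be set from another thread; the class and function names are illustrative.

```python
import threading

class OverrideSwitch:
    """A halt flag an analyst can set at any time, from any thread."""
    def __init__(self):
        self._halted = threading.Event()

    def halt(self):
        self._halted.set()

    def resume(self):
        self._halted.clear()

    def is_halted(self) -> bool:
        return self._halted.is_set()

def run_response(steps, switch: OverrideSwitch) -> list:
    """Run (name, action) steps in order, checking the override before each one."""
    executed = []
    for name, action in steps:
        if switch.is_halted():
            break  # analyst has halted the response; skip remaining steps
        action()
        executed.append(name)
    return executed
```

Checking the flag between steps means a halt takes effect before the next action starts, though it does not interrupt an action already in progress.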

Phase 4: Full Agentic Operations

In the final phase, AI agents form the SOC's primary operational layer and handle most threats at machine speed, from detection to containment. Human analysts focus on threat hunting, detection engineering, policy governance, and complex incident command.

The Human Element: Redefining the Analyst Role

Perhaps the most significant effect of the Agentic SOC transition is on the people within the SOC. Analyst roles are not being lost; they are evolving.

The tier-1 analyst who spent eight hours a day on SIEM triage becomes an AI supervisor: reviewing autonomous decisions, giving the AI corrective feedback, and handling the cases the AI finds genuinely ambiguous. It is a more skilled and more engaging position, which reduces burnout and improves retention.

Senior analysts and detection engineers are becoming the designers of the AI's behavior. They write detection logic, establish autonomy policies, and actively hunt for new threats that the AI has never encountered before. As a result, the required skill set is shifting. Instead of simply running the playbook, professionals must design and refine the systems that execute it.

Security leadership gains something it could not have before: truly continuous coverage. AI doesn’t get tired at 3 am. It does not get distracted by the fifteenth alert. It applies the same analytical rigor to every signal, all the time. Organizations can actually achieve continuous monitoring rather than treating it as an aspirational claim.

Risks, Pitfalls, and Governance

Autonomous AI response carries significant risk in security environments. An error can lead not only to missed threats but also to crashed production systems, breaches of privacy laws, or AI-made decisions that escalate an incident instead of containing it.

Risk: Autonomous Response Gone Wrong

An AI that mislabels a legitimate business operation as malicious and blocks it can do serious harm to the business. Teams should therefore construct containment guardrails conservatively and treat reversibility as a core design principle. Every automated action must leave an audit trail, and a human must be able to undo it at any point.
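Reversibility and auditability can be designed in together by refusing to run any action that does not come with its own undo. The following is a minimal sketch under that assumption; the `AuditedExecutor` class and the log fields are hypothetical, and the "block" here only edits an in-memory set.

```python
from datetime import datetime, timezone

class AuditedExecutor:
    """Runs actions only when paired with an undo, and logs both directions."""
    def __init__(self):
        self.audit_log = []
        self._undo_stack = []

    def execute(self, name, do, undo, actor="ai-agent"):
        do()
        self._undo_stack.append((name, undo))
        self._record("execute", name, actor)

    def undo_last(self, actor):
        """Reverse the most recent action; intended for a human analyst."""
        name, undo = self._undo_stack.pop()
        undo()
        self._record("undo", name, actor)
        return name

    def _record(self, event, name, actor):
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "action": name,
            "actor": actor,
        })

# Usage: block an IP (simulated with a set), then let an analyst reverse it.
blocked = set()
executor = AuditedExecutor()
executor.execute(
    "block_ip:203.0.113.7",
    do=lambda: blocked.add("203.0.113.7"),
    undo=lambda: blocked.discard("203.0.113.7"),
)
```

Because `execute` requires an `undo` callable, an action with no known reversal cannot be registered at all, which pushes irreversible actions toward human approval.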

Risk: Adversarial Manipulation

Advanced intruders may also try to manipulate AI detection models by crafting activity that looks harmless to ML systems while still achieving malicious goals. Red team exercises and adversarial robustness testing against the AI itself are needed.

Risk: Over-Reliance and Skill Atrophy

If human analysts stop conducting investigations now handled by AI, they may lose the deep expertise needed to monitor systems or intervene when failures occur. To prevent this, organizations must intentionally preserve investigative skills through regular threat hunting, tabletop exercises, and red team engagements.

Governance Framework

Measuring Success

The Agentic SOC’s value must be measured rigorously. Key performance indicators include:

- Mean time to detect (MTTD): Should fall substantially with continuous AI monitoring

- Mean time to respond (MTTR): Response time should be reduced from hours to minutes

- Analyst hours per incident: An indicator of AI leverage and operational efficiency

- False positive rate: AI should not simply generate noise at machine speed

- Coverage hours: The proportion of time the SOC is fully functional in terms of detection and response

- Escalation accuracy: In what percentage of cases are AI escalations to human beings actually necessary?

In the long term, the most telling metric is adversarial dwell time: how long attackers remain in the environment before detection and containment. A successful agentic SOC should push this down to minutes, not days.
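Two of these indicators, escalation accuracy and dwell time, can be sketched in a few lines. The record formats below are assumptions: escalations carry an analyst-assigned `needed` flag, and dwell time is measured from a `compromised` timestamp to a `contained` timestamp. The sample data is illustrative only.

```python
from datetime import datetime
from statistics import median

def escalation_accuracy(escalations: list) -> float:
    """Share of AI escalations that analysts judged to be necessary."""
    if not escalations:
        return 0.0
    return sum(1 for e in escalations if e["needed"]) / len(escalations)

def median_dwell_minutes(incidents: list) -> float:
    """Median minutes between initial compromise and containment."""
    dwell = [
        (datetime.fromisoformat(i["contained"])
         - datetime.fromisoformat(i["compromised"])).total_seconds() / 60
        for i in incidents
    ]
    return median(dwell)

# Illustrative records, not real data.
escalations = [{"needed": True}, {"needed": True}, {"needed": False}, {"needed": True}]
incidents = [
    {"compromised": "2024-03-01T09:00", "contained": "2024-03-01T09:10"},
    {"compromised": "2024-03-02T14:00", "contained": "2024-03-02T14:30"},
    {"compromised": "2024-03-03T22:00", "contained": "2024-03-03T22:20"},
]

print(escalation_accuracy(escalations))  # 0.75
print(median_dwell_minutes(incidents))   # 20.0
```

The median is used here rather than the mean so that a single long-running intrusion does not dominate the figure; either choice is defensible as long as it is applied consistently.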

Final Thoughts!

The Agentic SOC is not a far-off future scenario; it is a business necessity for organizations dealing with the current threat environment. AI’s scale, machine-speed response, and continuous coverage address structural weaknesses that even large human analyst teams cannot overcome.

That said, the change requires time, investment in data quality, and strong governance oversight. It also requires redefining the human role rather than simply eliminating it.

Organizations that navigate this change intelligently will emerge with a security posture that is intrinsically more robust, one where AI and human expertise work together, each doing what it does best. The question is no longer whether the Agentic SOC is coming; it is whether your organization will be prepared when it arrives, or whether your adversaries will get there first.