The rapid growth of enterprise AI infrastructure has transformed how organizations operate, compete, and innovate. However, as businesses scale their AI and machine learning infrastructure, they also face a growing challenge: managing the environmental impact of these systems.

In 2026, ESG mandates are pushing enterprises to reconsider how they design, deploy, and optimize their MLOps infrastructure and their use of OpenAI infrastructure. Sustainability is no longer a reporting exercise; it is now a core engineering responsibility.

This is where GreenOps comes in.

GreenOps introduces a structured approach to building and managing an organization's AI infrastructure with carbon efficiency at its core. It aligns engineering, operations, and sustainability goals to ensure that innovation does not come at the cost of environmental impact.

Understanding the Carbon Impact of Enterprise AI Infrastructure

Every layer of enterprise AI infrastructure contributes to energy consumption. From data pipelines to model training and inference, the entire lifecycle of AI and machine learning infrastructure demands compute-intensive processes.

Most organizations underestimate how much their MLOps infrastructure contributes to carbon emissions. Continuous integration pipelines, repeated training cycles, and inefficient data handling create hidden energy costs that accumulate over time.

Similarly, OpenAI infrastructure usage, especially when scaled across applications, can significantly increase energy consumption if not optimized. Large-model queries, redundant prompts, and unnecessary inference calls drive inefficiency.

To build sustainable systems, organizations must first understand that enterprise AI infrastructure is both a technological asset and an environmental responsibility.

Why 2026 ESG Mandates Are a Turning Point

The shift toward ESG-driven operations forces companies to treat sustainability as a measurable outcome. Companies must now demonstrate how their AI and machine learning infrastructure aligns with environmental goals.

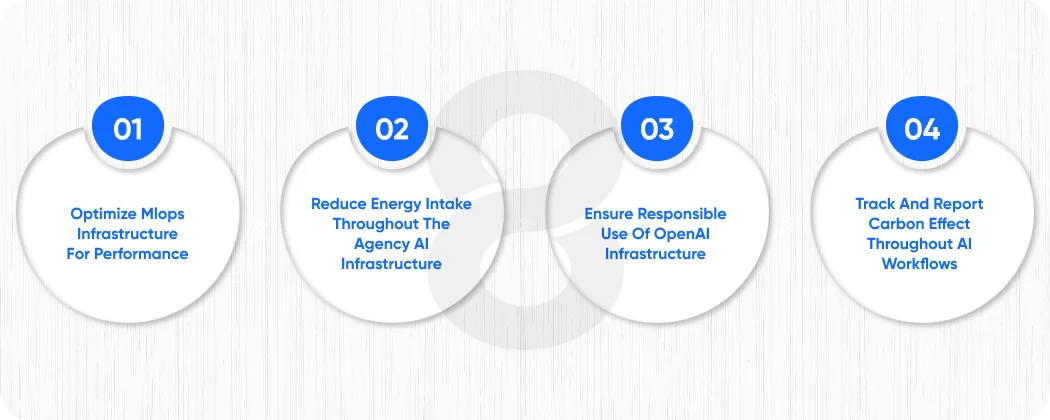

This creates direct pressure on engineering teams to:

- Optimize MLOps infrastructure for efficiency

- Reduce energy consumption across enterprise AI infrastructure

- Ensure responsible use of OpenAI infrastructure

- Track and report carbon impact across AI workflows

In this new landscape, sustainability becomes a competitive advantage. Organizations that build efficient AI infrastructure benefit not only from compliance but also from cost savings and operational agility.

What Is GreenOps in Enterprise AI Infrastructure?

GreenOps is an operational framework that integrates sustainability into the lifecycle of enterprise AI infrastructure. It ensures that every decision, from architecture design to deployment, considers environmental impact.

Unlike traditional optimization approaches, GreenOps focuses on balancing:

- Performance

- Cost

- Carbon efficiency

It extends deep into MLOps infrastructure, ensuring that pipelines, workflow automation, and orchestration strategies run efficiently. It also influences how businesses use OpenAI infrastructure, encouraging smarter and more responsible consumption.

At its core, GreenOps transforms AI and machine learning infrastructure into systems that are not only efficient but also sustainable.

The global AI in ESG & Sustainability market is expected to grow from about $182 billion in 2024 to nearly $847 billion by 2032, a ~21% CAGR, driven by corporate sustainability mandates and AI‑powered reporting and optimization tools.

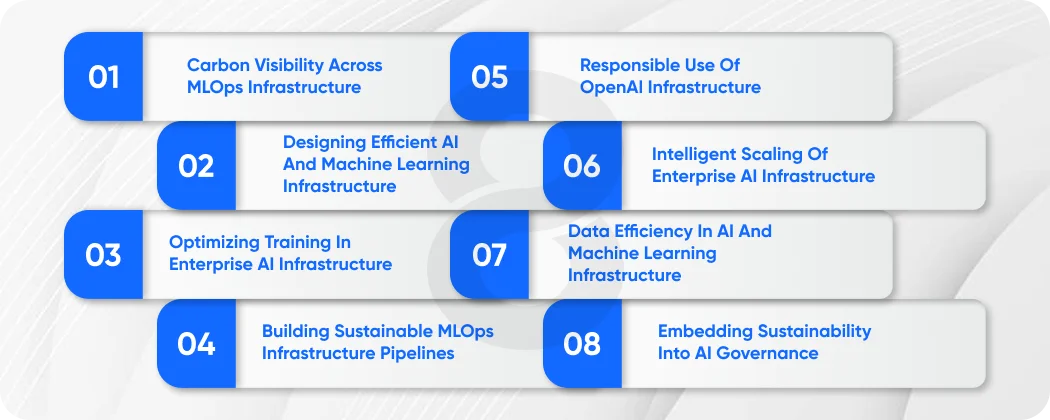

The GreenOps Blueprint for Enterprise AI Infrastructure

To effectively reduce the carbon footprint of enterprise AI infrastructure, companies need to follow a structured approach. The GreenOps blueprint provides a practical framework.

1. Carbon Visibility Across MLOps Infrastructure

The first step is visibility.

Without clear insight into how your MLOps infrastructure consumes resources, you cannot optimize it. Enterprises need to put in place monitoring systems that track energy usage across pipelines, training processes, and deployments.

This includes:

- Measuring compute usage per model

- Tracking energy consumption during training

- Monitoring inference load in production

When businesses gain visibility into their enterprise AI infrastructure, they unlock the ability to make data-driven sustainability decisions.
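
The measurements listed above can be turned into a rough per-model carbon estimate. The following is a minimal sketch, not a reference implementation: the `TrainingRun` record, the PUE value, and the grid carbon intensity are illustrative assumptions, and real figures should come from your own metering and your electricity provider's data.

```python
from dataclasses import dataclass


@dataclass
class TrainingRun:
    """Hypothetical record of one training job's measured usage."""
    model_name: str
    avg_power_watts: float   # average power draw of the hardware used
    duration_hours: float    # wall-clock duration of the job


def estimate_emissions_kg(run: TrainingRun,
                          pue: float = 1.2,
                          grid_kg_co2_per_kwh: float = 0.4) -> float:
    """Estimate emissions as energy (kWh) x PUE x grid carbon intensity.

    The default pue and grid_kg_co2_per_kwh are placeholder assumptions.
    """
    energy_kwh = run.avg_power_watts * run.duration_hours / 1000.0
    return energy_kwh * pue * grid_kg_co2_per_kwh


# Example with made-up numbers: 2 kW average draw for 10 hours.
run = TrainingRun("demo-model", avg_power_watts=2000.0, duration_hours=10.0)
print(f"{run.model_name}: {estimate_emissions_kg(run):.2f} kg CO2e")
```

Even a coarse estimate like this makes it possible to rank models and pipelines by impact before investing in finer-grained metering.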

2. Designing Efficient AI and Machine Learning Infrastructure

Instead of defaulting to large models, teams should build AI and machine learning infrastructure that prioritizes efficiency. Smaller, task-specific models often deliver comparable results with significantly lower resource consumption.

An optimized architecture reduces the load on MLOps infrastructure, enabling faster training cycles and lower energy usage.

This approach also ensures that enterprise AI infrastructure scales intelligently, rather than simply scaling compute.

3. Optimizing Training in Enterprise AI Infrastructure

Model training is one of the most resource-intensive processes in enterprise AI infrastructure.

To reduce its carbon footprint, teams need to:

- Eliminate redundant training cycles

- Reuse pre-trained models where feasible

- Optimize hyperparameter tuning strategies

- Use distributed training efficiently

By refining training workflows, organizations can significantly reduce the resource demands of their AI and machine learning infrastructure.
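
One way to eliminate redundant training cycles is to fingerprint each run and skip it when an identical run already produced an artifact. The sketch below assumes a hypothetical in-memory `TrainingRegistry` and a caller-supplied `train_fn`; a production system would persist the registry and would also need to fingerprint the training code itself.

```python
import hashlib
import json
from typing import Callable, Dict, Tuple


def run_fingerprint(config: dict, dataset_version: str) -> str:
    """Hash the hyperparameters and dataset version into a stable key."""
    payload = json.dumps({"config": config, "data": dataset_version},
                         sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class TrainingRegistry:
    """Hypothetical in-memory registry of already-trained artifacts."""

    def __init__(self) -> None:
        self._artifacts: Dict[str, str] = {}

    def train_if_needed(self, config: dict, dataset_version: str,
                        train_fn: Callable[[dict], str]) -> Tuple[str, bool]:
        """Return (artifact, trained_now), reusing identical earlier runs."""
        key = run_fingerprint(config, dataset_version)
        if key in self._artifacts:
            return self._artifacts[key], False
        artifact = train_fn(config)
        self._artifacts[key] = artifact
        return artifact, True


calls = []


def fake_train(config: dict) -> str:
    """Stand-in for a real training job; records that it was invoked."""
    calls.append(config)
    return f"model-lr-{config['lr']}"


registry = TrainingRegistry()
first = registry.train_if_needed({"lr": 0.01}, "v1", fake_train)
second = registry.train_if_needed({"lr": 0.01}, "v1", fake_train)
print(first, second, len(calls))
```

The second call returns the stored artifact without invoking `fake_train` again, which is the compute the check is meant to save.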

4. Building Sustainable MLOps Infrastructure Pipelines

The structure of your MLOps infrastructure directly affects sustainability.

Inefficient pipelines cause:

- Duplicate processing

- Idle compute resources

- Increased storage usage

To address this, companies must design MLOps infrastructure that emphasizes:

- Resource-aware automation

- Pipeline optimization

- Smart orchestration

A well-optimized MLOps infrastructure not only reduces emissions but also improves deployment speed and reliability.

5. Responsible Use of OpenAI Infrastructure

Many enterprises now depend on OpenAI infrastructure to power AI-driven applications. While powerful, it must be used efficiently.

Key strategies include:

- Reducing unnecessary API calls

- Optimizing prompt structures

- Implementing caching mechanisms

- Selecting appropriate model sizes

When enterprises treat OpenAI infrastructure as a shared resource that requires optimization, they reduce both costs and environmental impact.
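
The caching strategy above can be sketched as a thin wrapper around whatever completion call an application already makes. This is an illustrative sketch only: `CachedCompletion`, `complete_fn`, and `fake_model` are hypothetical names, no specific vendor API is assumed, and exact-match caching is only appropriate where returning a previous answer for the same prompt is acceptable.

```python
import hashlib
from typing import Callable, Dict


class CachedCompletion:
    """Wrap any completion function with an exact-match response cache.

    complete_fn is a stand-in for the client call your application uses.
    """

    def __init__(self, complete_fn: Callable[[str, str], str]) -> None:
        self._complete_fn = complete_fn
        self._cache: Dict[str, str] = {}
        self.upstream_calls = 0  # how many requests actually left the cache

    def complete(self, model: str, prompt: str) -> str:
        # Collapse whitespace so trivially different prompts share an entry.
        normalized = " ".join(prompt.split())
        key = hashlib.sha256(
            f"{model}\n{normalized}".encode("utf-8")).hexdigest()
        if key not in self._cache:
            self.upstream_calls += 1
            self._cache[key] = self._complete_fn(model, normalized)
        return self._cache[key]


def fake_model(model: str, prompt: str) -> str:
    """Placeholder backend used only for this demonstration."""
    return f"[{model}] answer to: {prompt}"


client = CachedCompletion(fake_model)
a = client.complete("small-model", "What is GreenOps?")
b = client.complete("small-model", "What  is   GreenOps?")
print(a == b, client.upstream_calls)
```

Tracking `upstream_calls` alongside total requests gives a cache hit rate, which is a simple proxy for avoided inference work.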

6. Intelligent Scaling of Enterprise AI Infrastructure

Scaling without control leads to inefficiency. GreenOps promotes intelligent scaling strategies in which enterprise AI infrastructure expands based on actual demand rather than projected load.

This includes:

- Auto-scaling systems

- Serverless architectures

- Demand-based resource allocation

These strategies ensure that AI and machine learning infrastructure uses only the resources it needs.
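
Demand-based allocation can be illustrated with a small sizing function that derives the replica count from observed load. This is a simplified sketch under stated assumptions: the function name, the per-replica capacity, and the replica bounds are all hypothetical, and real autoscalers add smoothing and cooldown periods to avoid thrashing.

```python
import math


def desired_replicas(requests_per_second: float,
                     capacity_per_replica: float,
                     min_replicas: int = 0,
                     max_replicas: int = 20) -> int:
    """Return the smallest replica count that covers the observed load.

    A min_replicas of 0 allows scale-to-zero when there is no traffic.
    """
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    needed = math.ceil(requests_per_second / capacity_per_replica)
    return max(min_replicas, min(max_replicas, needed))


# Made-up load samples against an assumed capacity of 50 requests/s.
for load in (0.0, 35.0, 400.0, 5000.0):
    print(load, desired_replicas(load, capacity_per_replica=50.0))
```

Sizing from measured demand rather than a forecast peak is what keeps idle capacity, and the energy it draws, to a minimum.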

7. Data Efficiency in AI and Machine Learning Infrastructure

Data is the foundation of AI and machine learning infrastructure, but it also contributes to energy consumption.

To optimize data usage:

- Reduce duplication

- Implement efficient storage strategies

- Archive unused datasets

- Optimize data pipelines

Efficient data handling improves the performance of MLOps infrastructure while lowering environmental impact.
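
As one concrete way to reduce duplication, datasets can be grouped by content hash so that byte-identical copies are found regardless of file name. The sketch below is a minimal illustration using only the Python standard library; the function name and the temporary demo files are hypothetical.

```python
import hashlib
import tempfile
from collections import defaultdict
from pathlib import Path
from typing import Dict, List


def find_duplicate_files(root: Path) -> Dict[str, List[Path]]:
    """Group files under root by the SHA-256 digest of their content.

    Only groups containing more than one file are returned.
    """
    by_digest: Dict[str, List[Path]] = defaultdict(list)
    for path in sorted(root.rglob("*")):
        if path.is_file():
            digest = hashlib.sha256()
            with path.open("rb") as handle:
                # Read in 1 MiB chunks so large datasets fit in memory.
                for chunk in iter(lambda: handle.read(1 << 20), b""):
                    digest.update(chunk)
            by_digest[digest.hexdigest()].append(path)
    return {d: paths for d, paths in by_digest.items() if len(paths) > 1}


# Demonstration on throwaway files in a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "a.csv").write_text("x,y\n1,2\n")
    (root / "copy_of_a.csv").write_text("x,y\n1,2\n")
    (root / "b.csv").write_text("x,y\n3,4\n")
    duplicates = find_duplicate_files(root)
    duplicate_names = [sorted(p.name for p in group)
                       for group in duplicates.values()]
print(duplicate_names)
```

The report only identifies candidates; whether to delete, archive, or link the copies remains a governance decision.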

8. Embedding Sustainability into AI Governance

Sustainability should be built into governance frameworks. Enterprises should establish policies that ensure:

- Carbon-aware development practices

- Responsible use of OpenAI infrastructure

- Continuous optimization of MLOps infrastructure

- Transparent reporting of environmental impact

Governance ensures that sustainability becomes a long-term capability within enterprise AI infrastructure.

Common Challenges in Green AI Transformation

Implementing GreenOps within enterprise AI infrastructure requires more than intent; it demands structural, cultural, and technical transformation. Many organizations struggle because they treat sustainability as an add-on rather than embedding it into their MLOps and AI and machine learning infrastructure.

1. Limited Visibility Across Enterprise AI Infrastructure

Most enterprises lack granular visibility into how their AI infrastructure consumes energy. While teams monitor performance and cost, they rarely track carbon impact across training, inference, and data pipelines.

Without deep observability:

- Inefficient workloads go unnoticed

- Redundant processes persist in MLOps infrastructure

- OpenAI usage scales without optimization

To solve this, organizations should integrate carbon observability directly into their AI and machine learning infrastructure, ensuring every pipeline and workload becomes measurable.

2. Inefficient Legacy MLOps Infrastructure

Legacy MLOps infrastructure is often built on outdated workflows, static pipelines, and manual interventions. These systems were not designed for sustainability or efficiency.

Common issues include:

- Over-provisioned compute resources

- Repeated model training cycles

- Lack of automation

- Poor resource orchestration

Modernizing MLOps infrastructure is essential. Without it, even the most advanced enterprise AI infrastructure will continue to waste energy and increase emissions.

3. Over-Reliance on Large Models in OpenAI Infrastructure

Many enterprises default to large, general-purpose models when using OpenAI infrastructure, even when smaller models can achieve similar results.

This leads to:

- Higher inference costs

- Increased energy consumption

- Slower response times

A GreenOps-driven approach encourages:

- Model right-sizing

- Task-specific optimization

- Efficient prompt engineering

Optimizing OpenAI infrastructure usage becomes one of the fastest ways to reduce the carbon footprint of enterprise AI infrastructure.

4. Organizational Silos Between Engineering and Sustainability Teams

In many enterprises, sustainability teams operate separately from the engineering teams managing AI and machine learning. This disconnect creates:

- Misaligned priorities

- Lack of actionable insights

- Inefficient ESG reporting

GreenOps bridges this gap by embedding sustainability metrics directly into AI and machine learning infrastructure, making them part of daily engineering workflows rather than a separate reporting function.

5. Lack of Standardized Metrics for AI Sustainability

Unlike cost and performance, sustainability lacks standardized metrics across enterprise AI infrastructure.

Organizations struggle to answer:

- How much carbon does a model generate?

- What is the energy cost per inference?

- How efficient is our MLOps infrastructure?

Establishing internal benchmarks and standardized KPIs is essential for scaling sustainable AI and machine learning infrastructure.
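
An internal KPI for the "energy cost per inference" question can be as simple as metered energy divided by request count. The sketch below assumes hypothetical function names and made-up sample values; the grid intensity in particular is a placeholder that should be replaced with data for the region where the workload runs.

```python
def energy_per_inference_wh(total_energy_kwh: float,
                            inference_count: int) -> float:
    """Average energy per request in watt-hours over a metering window."""
    if inference_count <= 0:
        raise ValueError("inference_count must be positive")
    return total_energy_kwh * 1000.0 / inference_count


def carbon_per_inference_g(energy_wh: float,
                           grid_g_co2_per_kwh: float) -> float:
    """Convert per-request energy into grams of CO2e."""
    return energy_wh / 1000.0 * grid_g_co2_per_kwh


# Made-up sample: 12 kWh metered while serving 48,000 requests,
# with an assumed grid intensity of 400 gCO2e/kWh.
wh = energy_per_inference_wh(12.0, 48_000)
grams = carbon_per_inference_g(wh, grid_g_co2_per_kwh=400.0)
print(f"{wh:.3f} Wh and {grams:.3f} g CO2e per inference")
```

Reporting the same two numbers per model and per release gives teams a consistent baseline to compare against, even before any industry-wide standard exists.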

6. Resistance to Change in Enterprise AI Infrastructure

Engineering teams often prioritize speed and performance over sustainability. Introducing GreenOps practices may initially slow down workflows or require new tooling.

However, enterprises that overcome this resistance benefit from:

- Long-term efficiency

- Lower operational costs

- Improved system reliability

Adopting GreenOps requires leadership alignment and a clear roadmap for transforming enterprise AI infrastructure.

Advanced Strategies to Overcome GreenOps Challenges

To overcome these challenges, organizations must adopt advanced strategies that deeply integrate sustainability into MLOps infrastructure and AI and machine learning infrastructure.

1. Carbon-Aware Workload Scheduling

Modern enterprise AI infrastructure can dynamically schedule workloads based on energy efficiency. This includes:

- Running training jobs during low-energy-demand periods

- Shifting workloads to regions with cleaner energy

- Prioritizing low-carbon compute resources

Carbon-aware scheduling transforms MLOps infrastructure into an intelligent system that actively reduces emissions.
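
The scheduling idea can be sketched as a search for the cleanest contiguous window across regions. The function name, the region names, and the hourly intensity forecasts below are made up for illustration; a real scheduler would pull forecasts from a grid-data provider and also weigh data locality, latency, and cost.

```python
from typing import Dict, List, Optional, Tuple


def pick_lowest_carbon_slot(
    forecast: Dict[str, List[float]],
    job_hours: int,
) -> Tuple[str, int, float]:
    """Return (region, start_hour, avg_intensity) of the cleanest window.

    forecast maps a region name to hourly grid intensity in gCO2/kWh.
    """
    if job_hours <= 0:
        raise ValueError("job_hours must be positive")
    best: Optional[Tuple[str, int, float]] = None
    for region, hourly in sorted(forecast.items()):
        # Slide a window of job_hours over the region's forecast.
        for start in range(len(hourly) - job_hours + 1):
            window = hourly[start:start + job_hours]
            avg = sum(window) / job_hours
            if best is None or avg < best[2]:
                best = (region, start, avg)
    if best is None:
        raise ValueError("no forecast window is long enough for the job")
    return best


# Made-up hourly forecasts for two hypothetical regions.
forecast = {
    "region-a": [420.0, 390.0, 310.0, 300.0, 350.0, 410.0],
    "region-b": [280.0, 260.0, 240.0, 330.0, 360.0, 380.0],
}
choice = pick_lowest_carbon_slot(forecast, job_hours=3)
print(choice)
```

This works best for deferrable jobs such as batch training or re-indexing, where a delay of a few hours has no user-facing cost.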

2. AI Model Lifecycle Optimization

Instead of focusing only on training, organizations need to optimize the entire lifecycle of models within AI and machine learning infrastructure. Key improvements include:

- Extending model lifespan

- Reducing retraining frequency

- Continuously optimizing model performance

Lifecycle optimization reduces the load on enterprise AI infrastructure, lowering both cost and carbon output.

3. Intelligent Resource Allocation in MLOps Infrastructure

Advanced orchestration tools enable MLOps infrastructure to allocate resources dynamically based on workload requirements.

Benefits include:

- Reduced idle compute

- Improved utilization rates

- Lower energy consumption

This ensures that enterprise AI infrastructure operates at peak efficiency without unnecessary resource usage.

4. Hybrid AI Architecture with OpenAI Infrastructure

Enterprises can combine internal models with OpenAI infrastructure to optimize efficiency.

For example:

- Use internal models for simple tasks

- Use OpenAI infrastructure only for the most complex queries

- Route requests dynamically based on workload

This hybrid approach reduces dependency on high-cost, high-energy operations while maintaining performance.
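
A hybrid router of this kind can be sketched with a complexity score and a threshold. Everything here is a hypothetical illustration: the keyword heuristic, the threshold of 30, and the two stand-in model functions are assumptions, and a real system would more likely use a trained classifier or confidence signals from the internal model.

```python
from typing import Callable, Tuple


def estimate_complexity(prompt: str) -> int:
    """Crude heuristic: word count plus a bonus for reasoning keywords."""
    score = len(prompt.split())
    lowered = prompt.lower()
    for keyword in ("explain", "analyze", "compare", "why"):
        if keyword in lowered:
            score += 20
    return score


def route(prompt: str,
          local_model: Callable[[str], str],
          hosted_model: Callable[[str], str],
          threshold: int = 30) -> Tuple[str, str]:
    """Send simple prompts to the internal model, complex ones upstream."""
    if estimate_complexity(prompt) < threshold:
        return "local", local_model(prompt)
    return "hosted", hosted_model(prompt)


def local_model(prompt: str) -> str:
    """Stand-in for a small internal model."""
    return f"local reply to: {prompt}"


def hosted_model(prompt: str) -> str:
    """Stand-in for a call to a large hosted model."""
    return f"hosted reply to: {prompt}"


simple = route("Reset my password", local_model, hosted_model)
complex_query = route(
    "Compare the quarterly emissions of our three data centers "
    "and explain the trend",
    local_model, hosted_model)
print(simple[0], complex_query[0])
```

Logging which route each request takes also produces the data needed to tune the threshold over time.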

5. Continuous Optimization Through Feedback Loops

GreenOps is not a one-time implementation; it requires continuous improvement.

Organizations should:

- Monitor MLOps infrastructure performance

- Analyze energy consumption trends

- Optimize pipelines iteratively

Feedback loops ensure that enterprise AI infrastructure evolves toward greater efficiency over time.

Building a GreenOps-First Enterprise Culture

Technology by itself cannot solve sustainability challenges. Organizations have to build a culture that prioritizes GreenOps across all levels of enterprise AI infrastructure.

Leadership Alignment

Executives must treat sustainability as a core business objective. This ensures that investments in MLOps infrastructure and AI and machine learning infrastructure align with ESG goals.

Engineering Ownership

Developers and data engineers must take responsibility for optimizing enterprise AI infrastructure.

This includes:

- Writing efficient code

- Reducing unnecessary computations

- Optimizing OpenAI infrastructure usage

Cross-Functional Collaboration

Sustainability, engineering, and operations teams need to collaborate closely to ensure that MLOps infrastructure supports ESG goals.

Future Trends in Sustainable Enterprise AI Infrastructure

The evolution of enterprise AI infrastructure will be driven by sustainability-focused innovation.

1. Carbon-Native AI Platforms

Future platforms will embed carbon tracking directly into AI and machine learning infrastructure, enabling real-time optimization.

2. Autonomous MLOps Infrastructure

AI-driven automation will optimize MLOps infrastructure without manual intervention, ensuring continuous efficiency improvements.

3. Smarter OpenAI Infrastructure Utilization

Organizations will adopt intelligent routing systems that reduce unnecessary usage of OpenAI infrastructure.

4. ESG-Integrated AI Governance

Sustainability metrics will become a core component of governance frameworks within enterprise AI infrastructure.

5. Energy-Efficient AI Models

New model architectures will prioritize efficiency, reducing the overall impact of AI and machine learning infrastructure.

The AI data center market, infrastructure built specifically for AI workloads, is forecast to expand from roughly $236 billion in 2025 to nearly $934 billion by 2030, with a 31.6% CAGR reflecting strong enterprise and cloud AI demand.

Final Thoughts: From Optimization to Transformation

Reducing the carbon footprint of enterprise AI infrastructure is not just about optimization; it is about transformation. Organizations should rethink how they design, deploy, and scale their MLOps infrastructure, ensuring that sustainability becomes a fundamental principle of AI and machine learning infrastructure. Hiring skilled machine learning engineers who can build energy-efficient models, optimize resource usage, and implement GreenOps best practices from the ground up supports this shift.

By adopting GreenOps and optimizing their use of OpenAI infrastructure, enterprises can build efficient and scalable systems, reduce operational costs, meet ESG mandates, and strengthen their competitive position. The organizations that lead this change will not only meet 2026 requirements but also help define the future of sustainable innovation.