Most businesses still talk about AI ownership in incomplete terms. They think owning AI means subscribing to a model provider, integrating a few APIs, adding automation into workflows, and maybe fine-tuning a system on proprietary data. Technically, that may count as “using AI,” but it does not mean the organization actually controls anything meaningful.

Now the business has to think about where the data lives, where model training occurs, where inference happens, who controls the infrastructure, what jurisdictions can access the systems, what vendors create dependency risk, and what happens if regulations suddenly shift around privacy, residency, or national data sovereignty.

That is exactly why sovereign AI is becoming one of the most serious strategic conversations in enterprise technology. And at the center of that conversation is ownership of the entire AI Lifecycle.

This is where the sovereign AI stack enters the picture.

What Is a Sovereign AI Stack?

A sovereign AI stack refers to an AI infrastructure architecture where an organization controls every critical layer of the AI Lifecycle within defined geographic, legal, and operational boundaries.

That includes control over:

- Data storage and residency

- Model training environments

- Compute infrastructure

- Inference environments

- Security and governance layers

- Deployment architecture

- Vendor dependencies

- Compliance and audit controls

The goal is not simply technical ownership. The goal is strategic, regulatory, and operational control.

Because if sensitive data leaves your jurisdiction during model training or inference, sovereignty is already compromised, regardless of how secure the vendor claims to be.

Read More: AGI vs AI – Which Technology Drives Better Business Automation?

Why Sovereign AI Matters More in 2026 Than It Did Before

A few years ago, most organizations were comfortable experimenting with cloud-hosted AI APIs. That comfort is disappearing. Why? Because businesses increasingly realize that AI systems are no longer peripheral tools. They are becoming embedded into core operations, customer systems, internal decision-making, compliance workflows, and intellectual property generation. That creates several immediate concerns:

Regulatory Pressure Is Increasing

Governments worldwide continue introducing stricter data residency and AI governance requirements.

Vendor Dependency Is Becoming Riskier

Building critical infrastructure entirely on external AI providers creates long-term strategic fragility.

Sensitive Data Cannot Always Leave Jurisdiction

Healthcare, finance, defense, public sector, and infrastructure organizations often cannot legally or strategically process data offshore.

AI Infrastructure Is Becoming Competitive Advantage

Organizations increasingly want proprietary ownership of the full AI Lifecycle rather than renting access indefinitely.

The Core Layers of the Sovereign AI Stack

Owning the AI Lifecycle requires more than self-hosting a model. It requires controlling every major operational layer.

Layer |

What It Includes |

Why It Matters |

| Data Layer | Collection, storage, pipelines, governance | Prevents unauthorized residency violations |

| Training Layer | Model training infrastructure | Maintains sovereignty during model creation |

| Compute Layer | GPUs, servers, orchestration | Controls infrastructure dependency |

| Inference Layer | Runtime environments | Protects live operational data |

| Security Layer | Encryption, IAM, audit systems | Secures sovereign boundaries |

| Governance Layer | Policy, monitoring, compliance | Maintains legal/regulatory alignment |

Read More: 10 Best AI Development Companies In The US

Where Most “Private AI” Strategies Quietly Fall Apart

A lot of companies believe they are building sovereign AI when they are not. They may host their application internally while still relying on external inference APIs. It may store data locally while training models in foreign cloud regions. They may fine-tune proprietary models but use third-party infrastructure for deployment. That is not sovereignty. That is partial localization. And partial localization still leaves gaps across the AI Lifecycle.

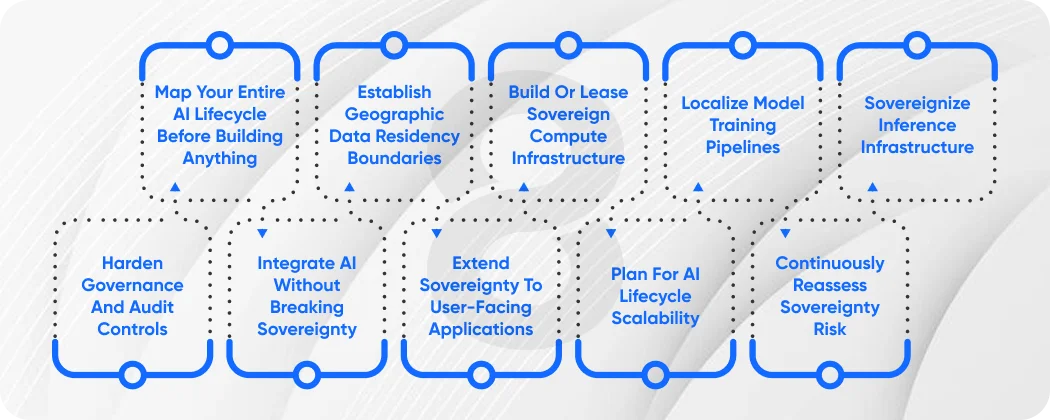

Step 1: Map Your Entire AI Lifecycle Before Building Anything

Most sovereignty initiatives fail because companies jump into infrastructure procurement before understanding their real operational architecture.

Before building a sovereign stack, map:

- Where data enters systems

- Where data is stored

- Where preprocessing occurs

- Where training occurs

- Where inference occurs

- Where logs and telemetry are stored

- Which vendors touch the pipeline

- Which jurisdictions affect each stage

This creates visibility into your actual AI Lifecycle exposure. Without this map, sovereignty planning becomes guesswork.

Step 2: Establish Geographic Data Residency Boundaries

Define exactly where data is legally and operationally allowed to exist.

That includes:

- Raw training data

- Processed datasets

- Model weights

- Fine-tuned checkpoints

- Inference logs

- User prompts

- Generated outputs

This matters because sovereignty breaks the moment critical data crosses unauthorized borders during any part of the AI Lifecycle.

Step 3: Build or Lease Sovereign Compute Infrastructure

True sovereignty requires jurisdiction-controlled compute. Organizations typically choose one of three models:

- Fully Owned Infrastructure: Maximum control, highest capital cost

- Sovereign Private Cloud: Dedicated regional infrastructure under compliant providers

- National/Regional Sovereign AI Platforms: Government-approved or jurisdiction-certified environments

This is where serious software development planning becomes necessary because infrastructure design directly affects scalability and operational efficiency.

Step 4: Localize Model Training Pipelines

Training is one of the most overlooked sovereignty risks. Many organizations keep data local but export datasets to foreign clouds for training because it feels operationally easier. That immediately compromises the AI Lifecycle.

Training pipelines should remain inside sovereign boundaries, including:

- Dataset preprocessing

- Fine-tuning jobs

- Hyperparameter optimization

- Model checkpoint storage

- Evaluation workflows

Step 5: Sovereignize Inference Infrastructure

Inference is where live business data flows continuously. That makes inference sovereignty arguably more important than training sovereignty.

Inference environments must keep:

- Prompt data local

- Runtime processing local

- Output generation local

- Telemetry local

This is particularly important for organizations deploying enterprise app development initiatives with embedded AI features. Because if enterprise systems call offshore AI endpoints, sovereignty disappears instantly.

Step 6: Harden Governance and Audit Controls

Sovereignty without governance is incomplete.

Organizations need governance layers covering:

- Access control policies

- Audit logging

- Infrastructure monitoring

- Data movement tracking

- Model lineage documentation

- Regulatory reporting frameworks

Strong governance ensures the AI Lifecycle remains compliant over time rather than only at deployment.

Step 7: Integrate AI Without Breaking Sovereignty

This is where many companies struggle. They build sovereign AI infrastructure, then ruin it during integration. Improper AI integration often reconnects sovereign systems to non-sovereign APIs, third-party analytics platforms, or foreign SaaS layers. Every integration point must be audited carefully. Because sovereignty is only as strong as the weakest link in the stack.

Step 8: Extend Sovereignty to User-Facing Applications

Applications consuming AI must also remain within architectural boundaries.

That includes:

- Internal dashboards

- Web platforms

- Mobile products

- Customer-facing portals

- Admin interfaces

Whether you are doing mobile app development or enterprise platform deployment, application architecture must preserve sovereign routing and data locality.

Step 9: Plan for AI Lifecycle Scalability

Sovereignty cannot destroy scalability.The stack must support:

- Increasing inference demand

- Larger training datasets

- New model deployment cycles

- Multi-region sovereign expansion

- Future compliance changes

Scalable architecture ensures the AI Lifecycle remains sustainable as adoption grows.

Step 10: Continuously Reassess Sovereignty Risk

Sovereignty is not static.

Risks evolve because:

- Vendors change infrastructure

- Regulations change

- Cloud providers alter regions

- Integrations expand

- Internal teams add tools

That means sovereignty requires recurring review.

Read More: How to Hire AI Developers in USA – A Strategic Approach in 2026

Sovereign AI Stack vs Traditional Cloud AI

Factor |

Sovereign AI Stack |

Traditional Cloud AI |

| Data Control | Full | Limited |

| Regulatory Alignment | Stronger | Variable |

| Vendor Dependency | Lower | Higher |

| Customization | Greater | Moderate |

| Upfront Cost | Higher | Lower |

| Strategic Control | Maximum | Limited |

How AI Development Fits Into Sovereign Architecture

Sovereignty does not eliminate innovation. It simply changes the deployment strategy. Strong AI development practices inside sovereign environments allow organizations to:

- Build proprietary internal models

- Fine-tune securely on private data

- Develop domain-specific intelligence systems

- Maintain innovation without external dependency

That transforms sovereignty from a compliance burden into a strategic advantage.

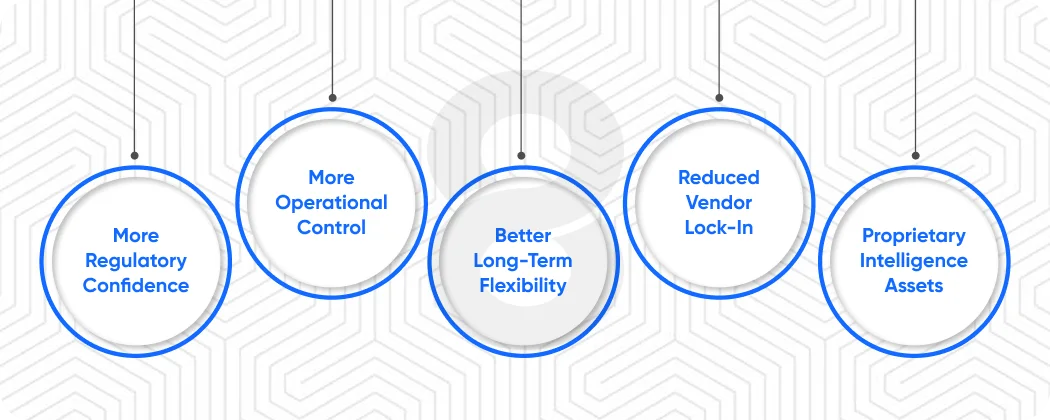

If AI Is Becoming Core Infrastructure, Ownership Matters

If AI is becoming embedded into your business operations, internal systems, products, or customer experience, then infrastructure ownership stops being an optional strategy and starts becoming an operational necessity. Because once critical intelligence workflows depend entirely on external infrastructure, your business inherits every risk attached to that dependency.

A properly designed sovereign stack gives your organization:

- More regulatory confidence

- More operational control

- Better long-term flexibility

- Reduced vendor lock-in

- Greater protection of proprietary intelligence assets

If your organization plans to scale AI seriously, controlling the AI Lifecycle early is usually much easier than rebuilding for sovereignty later.

Common Mistakes Businesses Make With Sovereign AI

- Confusing Private Hosting With Sovereignty: Private hosting alone does not equal sovereign control

- Ignoring Inference Residency: Inference often leaks more sensitive data than training

- Underestimating Integration Risk: Third-party connectors quietly break sovereignty often

- Treating Sovereignty As Pure Compliance: It is strategic infrastructure planning, not just legal defense

Final Thoughts

The organizations that will lead in AI over the next decade are not simply the ones using the most advanced models. They will be the organizations that control the infrastructure, data, deployment environments, and governance frameworks behind those models. That means controlling the entire AI Lifecycle.

Because sovereign AI is not just about where your servers sit, it is about whether your organization truly owns the intelligence infrastructure, becoming central to its future. And as AI becomes more deeply integrated into business operations, ownership will matter more than convenience.

The companies that understand this early will build stronger systems, protect more value, and maintain far more strategic flexibility than those who outsource critical intelligence infrastructure too casually.