Everyone jumped into AI thinking it would figure everything out automatically if you fed it enough data. More data equals better decisions: a simple equation, and it sounds convincing. And honestly, at first it does feel like that. You run a model, you see outputs, and you think, okay, this is working, this is smart, this is useful.

Then you try to actually use it inside real workflows. Not demos, not test prompts, real messy business processes where things are not clean, not structured, not perfect. And suddenly things start feeling slightly off. Not completely wrong, just small weird gaps. Outputs that look right but don’t fit. Decisions that ignore rules everyone already knows.

That’s where trust slowly disappears. Nobody announces it, nobody says “this AI is bad,” people just stop using it quietly.

And this is exactly where Neuro-Symbolic AI starts making sense. It doesn’t just rely on patterns, it brings logic into the picture. Not just prediction, but reasoning. Not just “this looks right,” but “this is right because these rules apply.”

That shift is small when you read it, but huge when you see it working.

What is Neuro-Symbolic AI

Think about it like this: there are two very different ways machines try to act smart.

Neural systems are flexible. They learn from data, patterns, examples, correlations. They don’t need rules, they just observe and adapt. That’s why they feel powerful.

Symbolic systems are structured. They follow rules, logic, constraints. If this then that. Clear, predictable, explainable. That’s why they feel safe but rigid.

Now combine both into one system. That’s Neuro-Symbolic AI. It learns from data but also respects rules. It adapts but also validates. It generates outputs but also checks whether those outputs make sense within a logical framework. So instead of just guessing better, it starts checking its guesses against logic.

Why Traditional AI Starts Breaking in Real Work

You’ve probably seen this pattern already. Everything looks perfect in a demo. Then it goes into production, and small cracks start showing up.

- Outputs feel slightly misaligned with business logic

- Rules get ignored even when they are obvious

- The same input can give slightly different outputs from one run to the next

- Teams stop trusting it and start double-checking everything

That last part is where everything collapses. Because if humans are still verifying everything, then what did we automate? Neural models are powerful, but they don’t naturally follow strict logic unless you constrain them heavily, and even then they can drift.

That’s why Neuro-Symbolic AI exists. It anchors learning with reasoning so systems don’t drift away from reality.

How Neuro-Symbolic AI Actually Works

It’s not complicated in concept, but it’s powerful in execution. You basically stack two layers of intelligence.

Neural layer:

- Learns from data

- Handles language, images, patterns

- Deals with uncertainty

Symbolic layer:

- Applies rules

- Enforces constraints

- Validates outputs

Now when a request comes in, the system doesn’t just respond. It processes, checks, validates, and then outputs. So instead of one step, it becomes a chain of reasoning. That’s why Neuro-Symbolic AI feels more stable. It doesn’t just move forward blindly, it keeps checking itself.
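
Here’s a rough sketch of that process, check, validate chain in Python. It’s an illustration, not a reference implementation: `neural_layer` is a hard-coded stand-in for a real model call, and the `Decision` type, the `max_refund` rule, and the refund limit are all made up for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Decision:
    action: str
    amount: float
    violations: List[str] = field(default_factory=list)

    @property
    def approved(self) -> bool:
        # A decision is approved only if no rule objected to it.
        return not self.violations


# A rule inspects a decision and returns a message if it is violated.
Rule = Callable[[Decision], Optional[str]]


def neural_layer(request: str) -> Decision:
    """Hypothetical stand-in for a trained model that proposes an action."""
    amount = 250.0 if "damaged" in request.lower() else 40.0
    return Decision(action="refund", amount=amount)


def max_refund(limit: float) -> Rule:
    """Build a rule that rejects refunds above `limit` (illustrative)."""
    def check(decision: Decision) -> Optional[str]:
        if decision.action == "refund" and decision.amount > limit:
            return f"refund {decision.amount} exceeds limit {limit}"
        return None
    return check


def symbolic_layer(decision: Decision, rules: List[Rule]) -> Decision:
    """Run every rule against the proposal and record what failed."""
    decision.violations = [
        message for rule in rules if (message := rule(decision)) is not None
    ]
    return decision


def handle(request: str, rules: List[Rule]) -> Decision:
    # Neural proposal first, symbolic validation second.
    return symbolic_layer(neural_layer(request), rules)
```

With a limit of 100.0, `handle("Item arrived damaged", [max_refund(100.0)])` comes back with one recorded violation instead of a quietly approved refund. The rule runs on every output, no matter how confident the model was.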

Key Features That Actually Matter

Forget fancy terms, focus on what actually changes how things work.

Core capabilities:

- Combines learning with rule-based reasoning

- Produces explainable decisions

- Handles messy data while respecting structure

- Maintains consistency across repeated tasks

Behavior differences:

- Less randomness in outputs

- Better handling of edge cases

- Logical flow instead of guesswork

- Easier to debug when something goes wrong
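
To make “explainable” and “easier to debug” a bit more concrete, here’s a small Python sketch where every rule has a name and every decision comes back with a pass/fail trace. The rule names, field names, and thresholds are hypothetical, and the candidate dict stands in for whatever a model would actually produce.

```python
from typing import Callable, Dict, List, NamedTuple


class RuleResult(NamedTuple):
    rule: str
    passed: bool


# Hypothetical named rules. Each takes a candidate decision (a dict)
# and returns True when the candidate satisfies the rule.
RULES: Dict[str, Callable[[dict], bool]] = {
    "score_in_range": lambda c: 0.0 <= c["score"] <= 1.0,
    "has_customer_id": lambda c: bool(c.get("customer_id")),
    "amount_positive": lambda c: c["amount"] > 0,
}


def explain(candidate: dict) -> List[RuleResult]:
    """Evaluate every rule and keep the result, pass or fail."""
    return [RuleResult(name, check(candidate)) for name, check in RULES.items()]


def failed_rules(trace: List[RuleResult]) -> List[str]:
    """Names of the rules that rejected the candidate."""
    return [result.rule for result in trace if not result.passed]
```

When something goes wrong, the trace tells you which rule objected, so you debug a named rule instead of staring at a model output.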

Real Use Cases That Feel Practical

Healthcare

Healthcare cannot rely on guesswork. Every decision matters. Neural models help with pattern detection, while symbolic rules enforce the safety constraints those detections have to respect.

- Diagnosis assistance

- Medical record validation

- Decision support systems

That’s where Neuro-Symbolic AI becomes useful, not just impressive.

Finance

Finance runs on rules. Compliance, audits, risk models. Pure neural systems can miss constraints.

- Fraud detection

- Risk scoring

- Financial reporting

Symbolic reasoning checks every decision against those rules before it goes out.

Customer Support

Most chatbots fail not because they are dumb, but because they don’t follow logic. They respond, but they don’t reason through policies. Adding a reasoning layer during AI chatbot development changes that:

- Answers follow company policies

- Edge cases handled properly

- Less escalation to human agents

Operations

Operations are full of repetitive but rule-heavy decisions.

- Approvals

- Task routing

- Process automation

Embedding the logic directly in the system is what makes workflow automation more reliable here.
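
As a rough illustration of an approval flow, the Python sketch below lets hard rules run first and only then consults a model confidence score. The thresholds, the segregation-of-duties rule, and the function name are assumptions made for the example, not anything prescribed by a particular tool.

```python
# Hypothetical policy values; a real system would load these from config.
AUTO_APPROVE_LIMIT = 10_000.0
MIN_CONFIDENCE = 0.9


def route_approval(amount: float,
                   model_confidence: float,
                   requester_is_approver: bool) -> str:
    """Decide where an approval request goes.

    Hard rules are checked before the model's confidence is considered,
    so a confident model can never override them.
    """
    if requester_is_approver:
        return "reject"            # nobody approves their own request
    if amount > AUTO_APPROVE_LIMIT:
        return "manual_review"     # large amounts always get a human
    if model_confidence >= MIN_CONFIDENCE:
        return "auto_approve"      # the model is trusted only inside the rules
    return "manual_review"
```

The ordering is the design choice that matters: the model decides only inside the space the rules leave open.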

Product and Systems

When AI becomes part of your product, stability matters more than creativity.

- Smart assistants

- Internal dashboards

- Decision engines

This is where AI integration becomes critical. Where you place the reasoning checks in the product matters more than model size.

Comparison Table

| # | Aspect | Neural AI | Symbolic AI | Neuro-Symbolic AI |
|---|---|---|---|---|
| 1 | Learning | High | Low | High |
| 2 | Reasoning | Low | High | High |
| 3 | Flexibility | High | Low | Balanced |
| 4 | Consistency | Medium | High | High |
| 5 | Explainability | Low | High | High |
| 6 | Business usability | Medium | Low | High |

Benefits You’ll Actually Notice

Operational:

- Fewer errors in workflows

- Consistent outputs across teams

- Faster execution of repetitive tasks

Strategic:

- Higher trust in AI systems

- Better decision-making support

- Easier scaling of expertise

Financial:

- Reduced rework

- Lower operational costs

- Better resource allocation

This is why Neuro-Symbolic AI slowly moves from experimental to essential.

Challenges That Show Up

Nothing is perfect, and this has its own friction.

Data:

- Needs structure and clarity

- Mixing rules with data is tricky

Tech:

- Integration is complex

- Requires expertise in both AI and logic

People:

- Teams need to understand new workflows

- Resistance to change is normal

Still, the payoff is worth it when systems start behaving reliably.

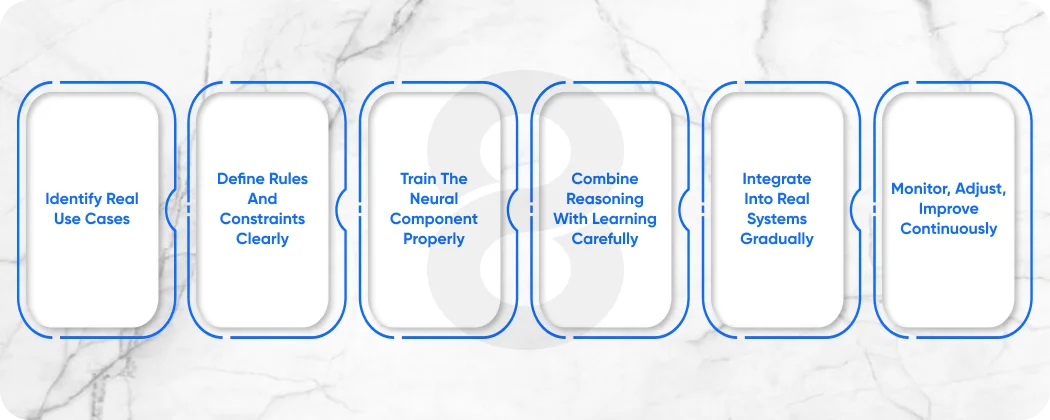

Implementation Strategy That Actually Works

Step 1: Identify real use cases

Don’t start with random experiments. Look at workflows where decisions depend on both data patterns and strict rules, like approvals, compliance checks, or structured customer interactions.

Step 2: Define rules and constraints clearly

Write down actual business logic. Not assumptions, real rules teams follow daily. If humans rely on it, your system needs it too. This becomes your symbolic layer.
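
One way to write rules down, sketched in Python, is to keep them as plain data so the people who own the policy can read and review them. The rule names, fields, and values below are invented for illustration, and the tiny operator table is an assumption about what a first version might need.

```python
import operator
from typing import Any, Callable, Dict, List

# Supported comparisons, mapped to standard-library functions.
OPS: Dict[str, Callable[[Any, Any], bool]] = {
    "<=": operator.le,
    ">=": operator.ge,
    "==": operator.eq,
    "in": lambda actual, allowed: actual in allowed,
}

# Hypothetical business rules, kept as data rather than code.
RULES: List[dict] = [
    {"name": "discount_cap", "field": "discount_pct", "op": "<=", "value": 20},
    {"name": "supported_region", "field": "region", "op": "in",
     "value": {"EU", "US"}},
]


def violations(record: dict, rules: List[dict] = RULES) -> List[str]:
    """Return the names of all rules that `record` breaks.

    A missing field counts as a violation, so gaps in the data
    are surfaced instead of silently passing.
    """
    broken = []
    for rule in rules:
        actual = record.get(rule["field"])
        if actual is None or not OPS[rule["op"]](actual, rule["value"]):
            broken.append(rule["name"])
    return broken
```

Keeping rules as data means changing a limit is an edit to a list, not a code change buried inside the model pipeline.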

Step 3: Train the neural component properly

Use domain-specific data, real examples, real workflows. The model should learn patterns that actually reflect how your business operates, not generic internet knowledge.

Step 4: Combine reasoning with learning carefully

Now connect both layers. Let the neural model generate outputs, but pass them through symbolic checks so rules are applied consistently before final decisions are made.
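
A minimal Python sketch of that hand-off: the model proposes ranked candidates, the symbolic checks filter them, and anything that fails every check is escalated rather than sent. `fake_model`, the candidate fields, and the 100 refund cap are hypothetical placeholders.

```python
from typing import Callable, Iterable, List, Optional

Check = Callable[[dict], bool]


def first_valid(candidates: Iterable[dict], checks: List[Check]) -> Optional[dict]:
    """Return the first candidate that passes every check, or None."""
    for candidate in candidates:
        if all(check(candidate) for check in checks):
            return candidate
    return None


def fake_model(prompt: str) -> List[dict]:
    """Stand-in for a neural model returning candidates, best guess first."""
    return [
        {"reply": "Full refund issued.", "refund": 500},
        {"reply": "Refund of 80 issued.", "refund": 80},
    ]


# Hypothetical policy checks applied to every candidate.
CHECKS: List[Check] = [
    lambda c: c["refund"] <= 100,         # refund cap
    lambda c: c["reply"].strip() != "",   # never send an empty reply
]


def decide(prompt: str) -> dict:
    chosen = first_valid(fake_model(prompt), CHECKS)
    if chosen is None:
        # Nothing passed the rules, so hand off instead of guessing.
        return {"reply": "Escalated to a human agent.", "refund": 0}
    return chosen
```

Here the model’s top candidate is rejected by the refund cap and the second one is used, so the final answer is always something the rules allow.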

Step 5: Integrate into real systems gradually

Start small. Plug it into one workflow, one tool, one process. Test it with real users and real data before expanding across your system.

Step 6: Monitor, adjust, improve continuously

Collect feedback, track errors, refine both rules and data. Over time the system becomes more aligned, more stable, and more useful in everyday operations.
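
Tracking errors can start very simply. The Python sketch below counts how often each rule is violated across decisions, which is one possible signal for whether the rules or the training data need attention. The class and method names are hypothetical.

```python
from collections import Counter
from typing import List, Tuple


class ViolationMonitor:
    """Counts rule violations across decisions (illustrative only)."""

    def __init__(self) -> None:
        self.total = 0            # number of decisions seen
        self.by_rule = Counter()  # rule name -> number of violations

    def record(self, violated_rules: List[str]) -> None:
        """Log one decision along with the rules it broke (possibly none)."""
        self.total += 1
        self.by_rule.update(violated_rules)

    def violation_rate(self, rule: str) -> float:
        """Share of decisions that broke `rule`; 0.0 before any data."""
        return self.by_rule[rule] / self.total if self.total else 0.0

    def noisiest(self, n: int = 3) -> List[Tuple[str, int]]:
        """The `n` most frequently violated rules."""
        return self.by_rule.most_common(n)
```

A rule that fires on half of all decisions usually means one of two things: the model has not learned that constraint, or the rule no longer matches how the business works. Either way, it tells you where to look first.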

Where This Is All Going

The future is not just bigger models. That phase is already happening. The next phase is smarter systems. Systems that:

- Learn from data

- Follow rules

- Explain decisions

- Stay consistent over time

That’s why Neuro-Symbolic AI is being seen as the next layer: not a replacement, but an evolution.

Stop Guessing and Start Building AI That Actually Makes Sense

Stop relying on systems that sound smart but break when things get real. Start building AI that follows your logic, respects your workflows, and actually supports your team instead of confusing it. When reasoning is built in, things stop feeling random and start feeling reliable. That’s when AI becomes something you trust, not something you test once and forget.

Conclusion

At some point, the goal shifts. It’s not about having the smartest model; it’s about having the most reliable system. Something that works every day, not just when conditions are perfect. Systems that only learn are powerful but unstable. Systems that only follow rules are stable but limited. When both come together, things finally balance out.

Decisions become clearer. Outputs make sense. Teams stop questioning everything and start relying on it naturally. That’s when AI stops being a tool you experiment with and starts becoming part of how work actually gets done.