Everyone gets impressed by AI at first. It writes, answers, and explains, and it even sounds confident while doing it. It feels like it knows everything. Then one day it says something completely wrong but still sounds very sure about it. That’s the weird part.

It isn’t random nonsense or an obvious mistake, just slightly off information that looks correct unless you already know the answer. That’s what makes it dangerous. This is where people start noticing AI hallucinations in real workflows, not just in experiments. You see them in reports, in chatbots, in automation systems, in places where accuracy actually matters. And suddenly the conversation changes: it’s no longer about how smart AI is, but about how reliable it is.

What Are AI Hallucinations

An AI hallucination happens when a model generates information that sounds correct but is actually false, misleading, or completely made up. The tricky part is the tone. The model doesn’t sound unsure; it sounds confident. That’s why hallucinations are not always obvious: they blend into normal outputs, which makes them hard to detect. The model isn’t trying to lie. It simply predicts the most likely answer based on patterns in its training data, even when it doesn’t truly “know.”
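To make the idea of predicting rather than knowing concrete, here is a deliberately tiny Python sketch. The probability table is invented for illustration; a real model scores candidates with a neural network over a huge vocabulary, but the selection step shares the same blind spot: it ranks continuations by likelihood and never checks them against reality.

```python
# Toy "model": made-up probabilities for what follows a given context.
# Real models compute these scores with a neural network; this table is hypothetical.
NEXT_TOKEN_PROBS = {
    "The study was published in": {"2019": 0.46, "2021": 0.31, "Nature": 0.23},
}


def greedy_complete(context: str) -> str:
    """Return the most probable continuation for a known context.

    Nothing here asks whether the study exists or which year is correct;
    the choice is based purely on the probabilities.
    """
    candidates = NEXT_TOKEN_PROBS[context]
    return max(candidates, key=candidates.get)


print(greedy_complete("The study was published in"))  # 2019
```

The answer comes back as a plain, confident “2019” whether or not such a study exists.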

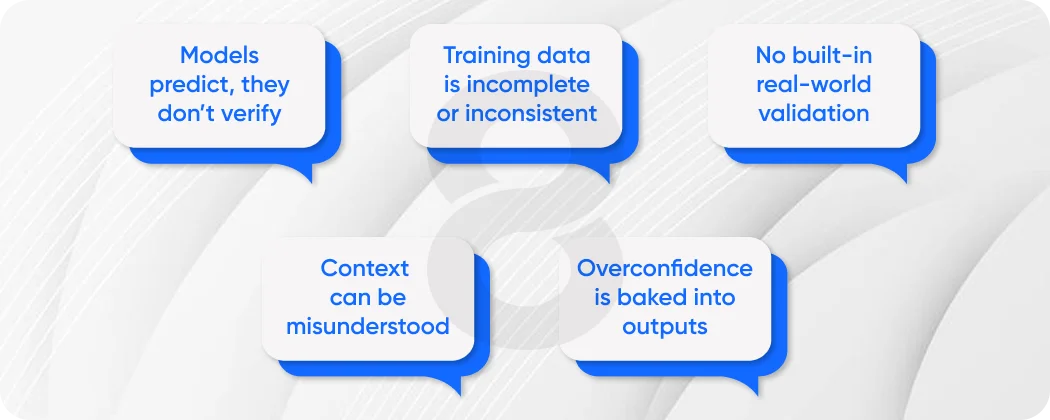

Why AI Hallucinations Happen

Before jumping into examples, you need to understand why this even happens.

- Models predict, they don’t verify

- Training data is incomplete or inconsistent

- No built-in real-world validation

- Context can be misunderstood

- Overconfidence is baked into outputs

This is why fixing hallucinations is not just about better prompts. It’s about system design.

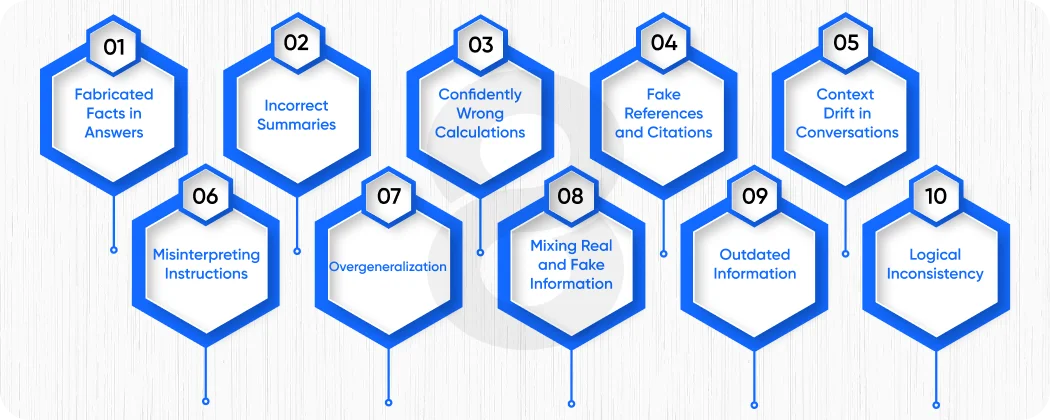

10 AI Hallucination Examples and Their Root Causes

1. Fabricated Facts in Answers

AI sometimes creates facts that don’t exist but sound realistic.

Example: A chatbot invents a statistic or study that was never published.

Root cause:

- Pattern completion instead of fact-checking

- Missing verification layer

This is one of the most common kinds of hallucination, and also one of the hardest to catch.
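A verification layer can be as simple as refusing to pass along a figure that doesn’t match a source you trust. The sketch below assumes a hypothetical `VERIFIED_STATS` store maintained by your team; in practice that might be a database or an internal reporting service.

```python
import re

# Hypothetical store of figures your team has already verified, keyed by topic.
VERIFIED_STATS = {
    "monthly churn rate": "4.2%",
}

PERCENT = re.compile(r"\d+(?:\.\d+)?%")


def check_statistic(topic: str, model_answer: str) -> str:
    """Compare percentages in a model answer against the verified figure."""
    expected = VERIFIED_STATS.get(topic)
    if expected is None:
        # No trusted source: don't let the figure through as fact.
        return "UNVERIFIED: no trusted source for this topic"
    found = PERCENT.findall(model_answer)
    if expected in found:
        return "OK"
    return f"MISMATCH: expected {expected}, model said {found or 'nothing'}"


print(check_statistic("monthly churn rate", "Churn sits at roughly 7.5% per month."))
# MISMATCH: expected 4.2%, model said ['7.5%']
```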

2. Incorrect Summaries

You provide a document, ask for a summary, and the model subtly twists its meaning.

Example: A legal summary changes the implication of a clause.

Root cause:

- Misinterpretation of context

- Overcompression of information
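Summaries can’t be fully checked by code, but some distortions can. One cheap heuristic, sketched below with that caveat, is to flag any number in the summary that never appears in the source. It would catch a notice period silently changing from 30 to 60 days, though not a subtler shift in meaning.

```python
import re

# Matches integers and decimals such as 30, 2.5, or 1,000.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*")


def unsupported_numbers(source: str, summary: str) -> set[str]:
    """Return numbers that appear in the summary but nowhere in the source."""
    return set(NUMBER.findall(summary)) - set(NUMBER.findall(source))


clause = "The tenant may terminate the lease with 30 days written notice."
summary = "Tenants can end the lease with 60 days notice."
print(unsupported_numbers(clause, summary))  # {'60'}
```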

3. Confidently Wrong Calculations

AI gives numerical answers that look logical but are incorrect.

Example: Financial projections with wrong totals.

Root cause:

- Weak reasoning in math

- Lack of validation checks
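A common fix for calculation errors is to stop asking the model to do arithmetic at all: let it extract the numbers, then recompute them in ordinary code. A minimal sketch, assuming the line items and the model’s reported total have already been pulled out as strings:

```python
from decimal import Decimal


def verify_total(line_items: list[str], reported_total: str) -> tuple[bool, Decimal]:
    """Recompute the total exactly and compare it with the model's figure.

    Decimal avoids binary floating-point rounding surprises with money.
    """
    actual = sum((Decimal(item) for item in line_items), Decimal("0"))
    return actual == Decimal(reported_total), actual


ok, actual = verify_total(["1200.50", "830.25", "415.00"], "2545.75")
print(ok, actual)  # False 2445.75
```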

4. Fake References and Citations

AI generates sources that don’t exist.

Example: Citing a research paper with a real-sounding title that can’t be found anywhere.

Root cause:

- Pattern-based generation

- No database verification
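A matching guard for citations is to accept only references you can resolve. The sketch below extracts DOIs from an answer and compares them with a hypothetical `KNOWN_DOIS` set (for example, an export from your reference manager). A DOI missing from the set isn’t proof of fabrication, only a signal that it needs checking before anyone relies on it.

```python
import re

# Hypothetical index of DOIs you have confirmed actually resolve.
KNOWN_DOIS = {"10.1000/example.2020.001"}

# DOIs start with "10.", a registrant code, a slash, then a suffix.
DOI_PATTERN = re.compile(r"10\.\d{4,9}/\S+")


def unknown_citations(answer: str) -> list[str]:
    """Return cited DOIs that are not in the known index."""
    # Trim punctuation that often trails a DOI at the end of a sentence.
    cited = [match.rstrip(".,;)") for match in DOI_PATTERN.findall(answer)]
    return [doi for doi in cited if doi not in KNOWN_DOIS]


answer = "See 10.1000/example.2020.001 and (Smith, 2021) 10.5555/made.up.4412."
print(unknown_citations(answer))  # ['10.5555/made.up.4412']
```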

5. Context Drift in Conversations

Long conversations slowly go off track.

Example: A chatbot starts mixing unrelated topics.

Root cause:

- Limited memory handling

- Context misalignment

This shows up a lot in AI chatbot development when conversations go beyond simple queries.
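One common mitigation for drift is to control what the model sees on every turn: keep the original instructions pinned and send only the most recent exchanges. The sketch below assumes messages are dicts with `role` and `content` keys, a shape many chat APIs use; the six-turn default is an arbitrary example value.

```python
def build_context(system_prompt: str, turns: list[dict], max_turns: int = 6) -> list[dict]:
    """Pin the system prompt and keep only the most recent conversation turns."""
    # Guard against max_turns == 0, because turns[-0:] would return every turn.
    recent = turns[-max_turns:] if max_turns > 0 else []
    return [{"role": "system", "content": system_prompt}, *recent]


history = [{"role": "user", "content": f"message {i}"} for i in range(10)]
context = build_context("Only answer questions about billing.", history, max_turns=3)
print([m["content"] for m in context])
# ['Only answer questions about billing.', 'message 7', 'message 8', 'message 9']
```

Pinning the system prompt keeps the conversation’s scope from scrolling out of view. Production systems often go further, for example by summarizing older turns instead of dropping them.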

6. Misinterpreting Instructions

AI misunderstands what the user actually wants.

Example: You ask for analysis, it gives a summary.

Root cause:

- Ambiguous prompts

- Weak intent recognition

7. Overgeneralization

AI applies a rule too broadly.

Example: Assuming all businesses follow the same process.

Root cause:

- Pattern bias

- Lack of domain-specific constraints

8. Mixing Real and Fake Information

Output that is half correct and half invented.

Example: A correct concept with the wrong explanation attached.

Root cause:

- Partial knowledge blending

- No validation layer

9. Outdated Information

AI gives answers that are no longer accurate.

Example: Old pricing, outdated policies.

Root cause:

- Static training data

- No real-time updates
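Static knowledge can’t be fixed by the model alone, but a system can track how old each answer is. A minimal sketch, assuming a hypothetical fact store where every entry records when it was last verified; the 90-day limit is an example policy, not a recommendation.

```python
from datetime import date, timedelta

# Hypothetical fact store; each value records when a person last confirmed it.
FACTS = {
    "pro plan price": {"value": "$49/month", "verified_on": date(2024, 1, 15)},
}


def answer_with_freshness(key: str, today: date, max_age_days: int = 90) -> str:
    """Return a stored fact, flagging it if it hasn't been verified recently."""
    fact = FACTS.get(key)
    if fact is None:
        return "I don't have verified information on that."
    age = today - fact["verified_on"]
    if age > timedelta(days=max_age_days):
        return f"{fact['value']} (last verified {fact['verified_on']}; may be outdated)"
    return fact["value"]


print(answer_with_freshness("pro plan price", today=date(2024, 12, 1)))
# $49/month (last verified 2024-01-15; may be outdated)
```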

10. Logical Inconsistency

AI contradicts itself within the same response.

Example: Gives two conflicting statements.

Root cause:

- Lack of reasoning layer

- No consistency checks

This is where systems without structured reasoning struggle the most, and the same kinds of hallucinations keep recurring across workflows.
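A basic consistency check becomes possible once a response’s claims are in structured form. The sketch below assumes the claims have already been extracted as `(subject, value)` pairs, for example by asking the model for structured output; that extraction step is the hard part and isn’t shown. It then flags any subject that was given more than one value.

```python
def find_contradictions(claims: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Return each subject that was assigned more than one distinct value."""
    values_by_subject: dict[str, set[str]] = {}
    for subject, value in claims:
        # Normalize case so "Refund window" and "refund window" count as one subject.
        values_by_subject.setdefault(subject.lower(), set()).add(value.lower())
    return {s: v for s, v in values_by_subject.items() if len(v) > 1}


claims = [
    ("Refund window", "30 days"),
    ("Support hours", "24/7"),
    ("refund window", "14 days"),
]
print(find_contradictions(claims))
```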

Root Causes Breakdown Table

| # | Problem Type | Root Cause | Impact |
|---|---|---|---|
| 1 | Fabricated facts | Pattern prediction | Misinformation |
| 2 | Wrong summaries | Context misreading | Miscommunication |
| 3 | Calculation errors | Weak reasoning | Financial risk |
| 4 | Fake citations | No verification | Loss of trust |
| 5 | Context drift | Memory limits | Poor UX |
| 6 | Misinterpretation | Prompt ambiguity | Wrong outputs |
| 7 | Overgeneralization | Bias in training | Incorrect decisions |
| 8 | Mixed info | Partial learning | Confusion |
| 9 | Outdated data | Static knowledge | Irrelevance |
| 10 | Inconsistency | No reasoning layer | Unreliable system |

Why This Matters in Real Systems

Hallucinations are not just annoying; they break trust.

- Teams stop relying on AI

- Outputs require manual checking

- Automation slows down instead of speeding up

This is why AI integration needs to include validation layers, not just models.

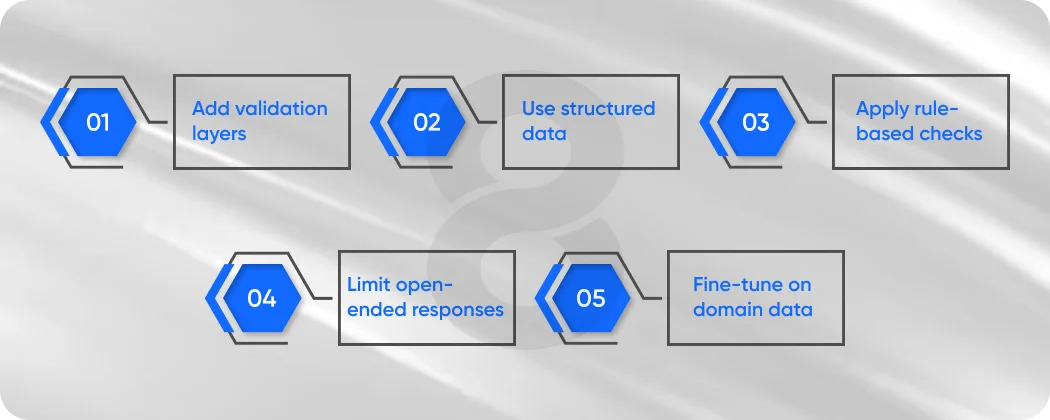

How to Reduce AI Hallucinations

You can’t eliminate them completely, but you can reduce them significantly.

Key Strategies

- Add validation layers

- Use structured data

- Apply rule-based checks

- Limit open-ended responses

- Fine-tune on domain data

This is where combining systems matters more than just scaling models.
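“Use structured data” and “limit open-ended responses” can be combined by asking the model for JSON with a fixed shape and rejecting anything outside it. A sketch for a hypothetical support-ticket classifier; the field names and allowed categories are illustrative assumptions.

```python
import json

# Illustrative schema for a hypothetical ticket classifier.
ALLOWED_CATEGORIES = {"billing", "technical", "account"}
REQUIRED_FIELDS = {"category", "priority"}


def parse_ticket(raw: str) -> dict:
    """Parse a model's JSON reply, raising ValueError if it doesn't fit the schema."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict) or set(data) != REQUIRED_FIELDS:
        raise ValueError(f"unexpected structure: {raw!r}")
    category = data["category"]
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        raise ValueError(f"unknown category: {category!r}")
    priority = data["priority"]
    # type() rather than isinstance(), so JSON true/false aren't accepted as integers.
    if type(priority) is not int or not 1 <= priority <= 5:
        raise ValueError(f"priority must be an integer from 1 to 5: {priority!r}")
    return data


print(parse_ticket('{"category": "billing", "priority": 2}'))
# {'category': 'billing', 'priority': 2}
```

When parsing fails, the usual options are to retry with the error message included in the prompt or to route the item to a person.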

Practical Fixes That Actually Work

In Chatbots

With AI chatbot development, you can:

- Restrict responses to knowledge bases

- Add fallback responses

- Use context validation
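Restricting a chatbot to a knowledge base mostly means giving it permission to say “I don’t know.” The sketch below uses naive keyword matching against a hypothetical `KNOWLEDGE_BASE` so it stays self-contained. A production system would more likely use retrieval with a similarity threshold, but the shape is the same: answer only from approved content, otherwise fall back.

```python
FALLBACK = "I'm not sure about that. Let me connect you with a human agent."

# Hypothetical approved content: required keywords mapped to vetted answers.
KNOWLEDGE_BASE = {
    frozenset({"reset", "password"}): "Use the 'Forgot password' link on the login page.",
    frozenset({"cancel", "subscription"}): "Go to Settings, then Billing, then Cancel plan.",
}


def answer(question: str) -> str:
    """Answer only from the knowledge base; otherwise return the fallback."""
    words = set(question.lower().replace("?", " ").split())
    for keywords, reply in KNOWLEDGE_BASE.items():
        if keywords <= words:  # every required keyword appears in the question
            return reply
    return FALLBACK


print(answer("How do I reset my password?"))
print(answer("What will your stock price be next year?"))
```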

In Automation

With workflow automation:

- Add checkpoints

- Validate outputs before execution

- Use rule-based triggers
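Validating before execution means the model proposes an action and deterministic code decides whether it runs. A minimal sketch for a hypothetical refund workflow; the `RefundAction` type, the order lookup, and the $100 auto-approval limit are all illustrative assumptions.

```python
from dataclasses import dataclass

MAX_AUTO_REFUND = 100.00  # hypothetical policy: larger refunds need a person


@dataclass
class RefundAction:
    """An action proposed by the model, e.g. parsed from its structured output."""
    order_id: str
    amount: float


def checkpoint(action: RefundAction, order_totals: dict[str, float]) -> str:
    """Decide whether a proposed refund may run automatically."""
    if action.order_id not in order_totals:
        return "reject: unknown order"
    if not 0 < action.amount <= order_totals[action.order_id]:
        return "reject: invalid amount"
    if action.amount > MAX_AUTO_REFUND:
        return "hold: needs human approval"
    return "execute"


orders = {"A1001": 250.00}
print(checkpoint(RefundAction("A1001", 40.00), orders))   # execute
print(checkpoint(RefundAction("A1001", 180.00), orders))  # hold: needs human approval
print(checkpoint(RefundAction("B2002", 15.00), orders))   # reject: unknown order
```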

In Development

With AI development:

- Combine models with logic systems

- Use retrieval-based methods

- Monitor outputs continuously
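Continuous monitoring can start with one number: the share of recent outputs that failed validation. A sketch, assuming each output already passes through some validation step that reports pass or fail; the window size and threshold are placeholders.

```python
from collections import deque


class ValidationMonitor:
    """Track the failure rate over the most recent validated outputs."""

    def __init__(self, window: int = 100, alert_threshold: float = 0.05):
        self.results = deque(maxlen=window)  # oldest results drop off automatically
        self.alert_threshold = alert_threshold

    def record(self, passed: bool) -> None:
        self.results.append(passed)

    def failure_rate(self) -> float:
        if not self.results:
            return 0.0
        return self.results.count(False) / len(self.results)

    def should_alert(self) -> bool:
        return self.failure_rate() > self.alert_threshold


monitor = ValidationMonitor(window=4, alert_threshold=0.25)
for passed in [True, True, False, True]:
    monitor.record(passed)
print(monitor.failure_rate(), monitor.should_alert())  # 0.25 False
monitor.record(False)  # window is now [True, False, True, False]
print(monitor.failure_rate(), monitor.should_alert())  # 0.5 True
```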

Stop Letting AI Guess and Start Making It Think

Most systems fail not because AI is weak, but because they let it guess everything without structure. If you want reliable outputs, you need systems that guide decisions, validate responses, and align with your workflows. Build AI that follows rules, understands context, and actually supports your team instead of creating more work. That’s when things start working properly.

Future Direction

The future is not just smarter AI; it’s more controlled AI.

Systems will:

- Combine reasoning with learning

- Reduce hallucinations through validation

- Become more predictable over time

This is where structured approaches start replacing pure generation systems.

Conclusion

At some point, the excitement around AI settles down and reality kicks in. It’s not about how impressive it looks in a demo; it’s about whether it actually works when people depend on it daily.

Hallucinations are not rare edge cases; they are part of how these systems behave when left unchecked. And once you see them happening in real workflows, you can’t unsee them. Reports feel unreliable, chatbots feel inconsistent, and automation starts needing supervision again. That’s when the value starts dropping.

But the fix is not to stop using AI. It’s to use it properly. Add structure. Add validation. Build systems that guide outputs instead of just generating them. When you combine learning with control, things start making sense again.

The shift is subtle but important. Instead of asking what AI can do, you start asking how it should behave inside your workflows. That’s where real value shows up.